本文采用张正友标定法确认相机的内参,代码环境为Python3.12。

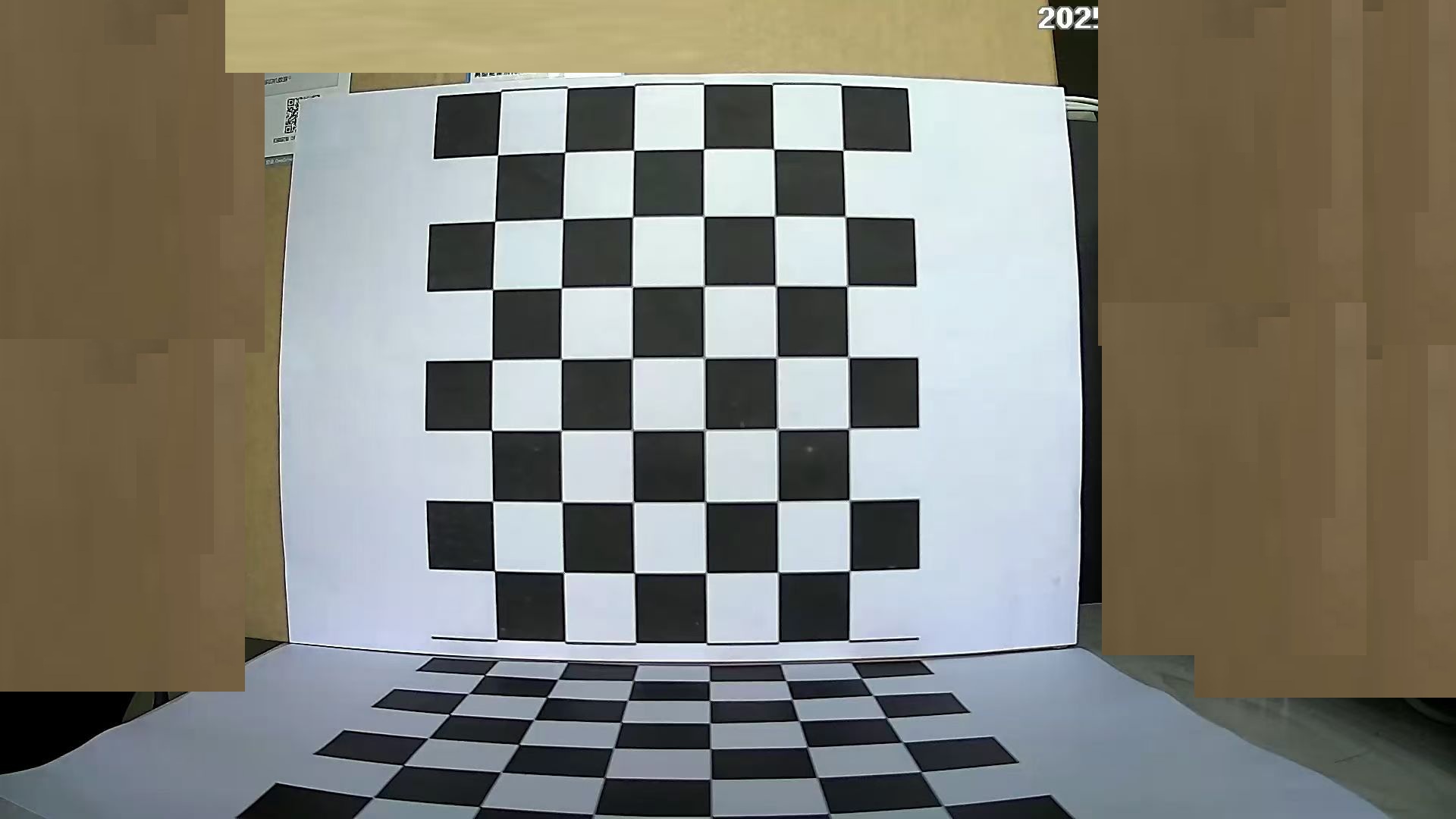

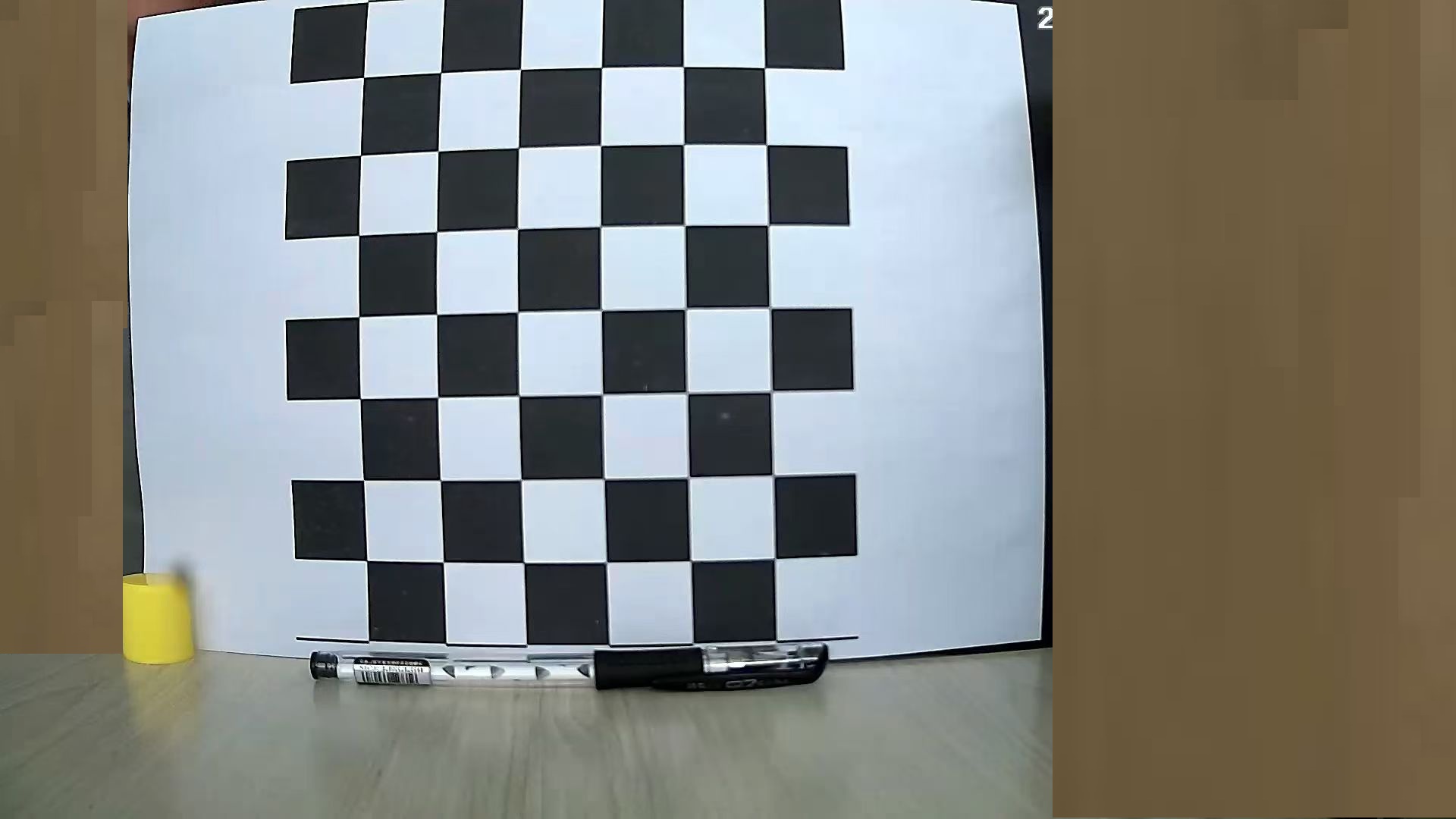

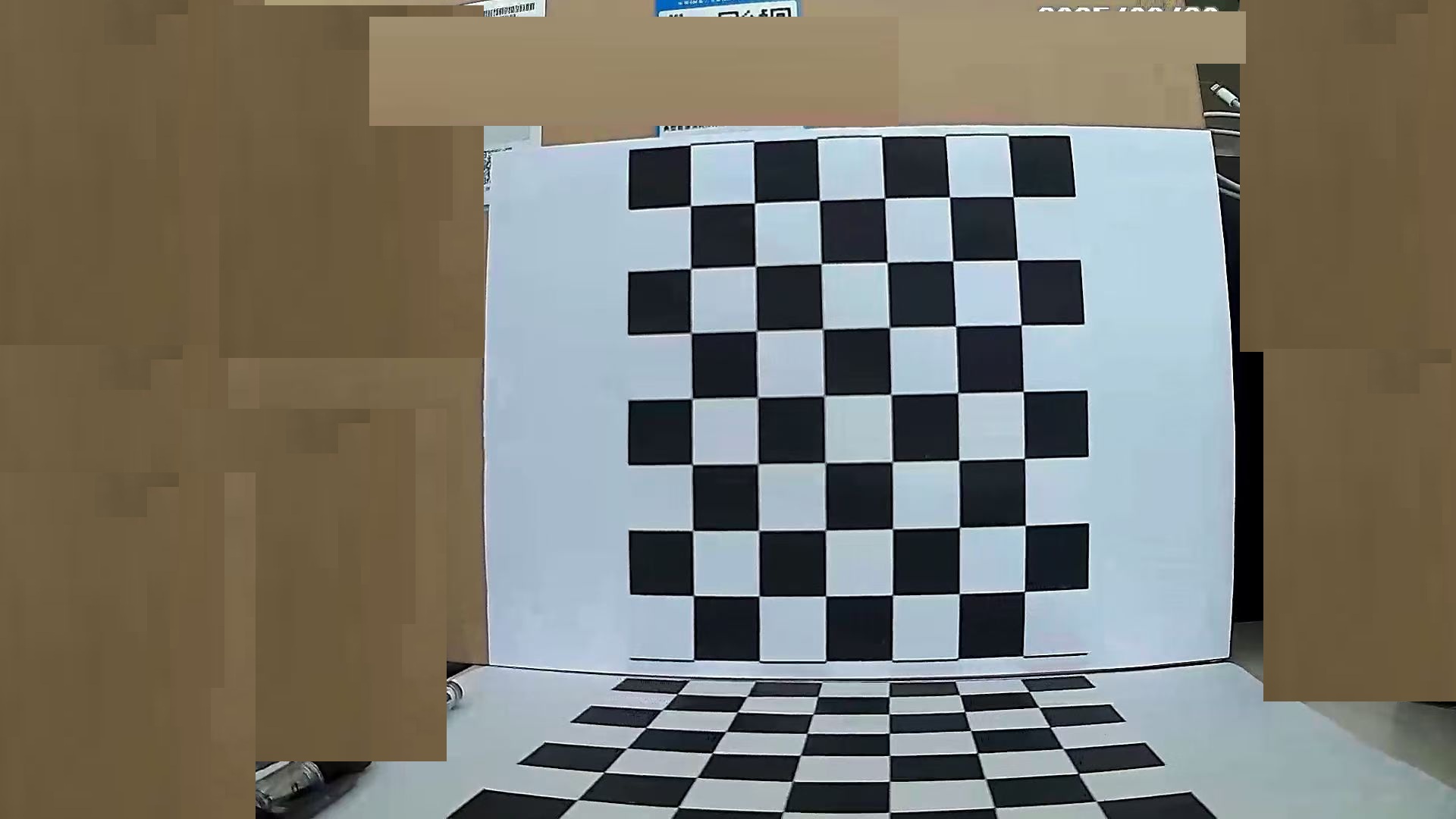

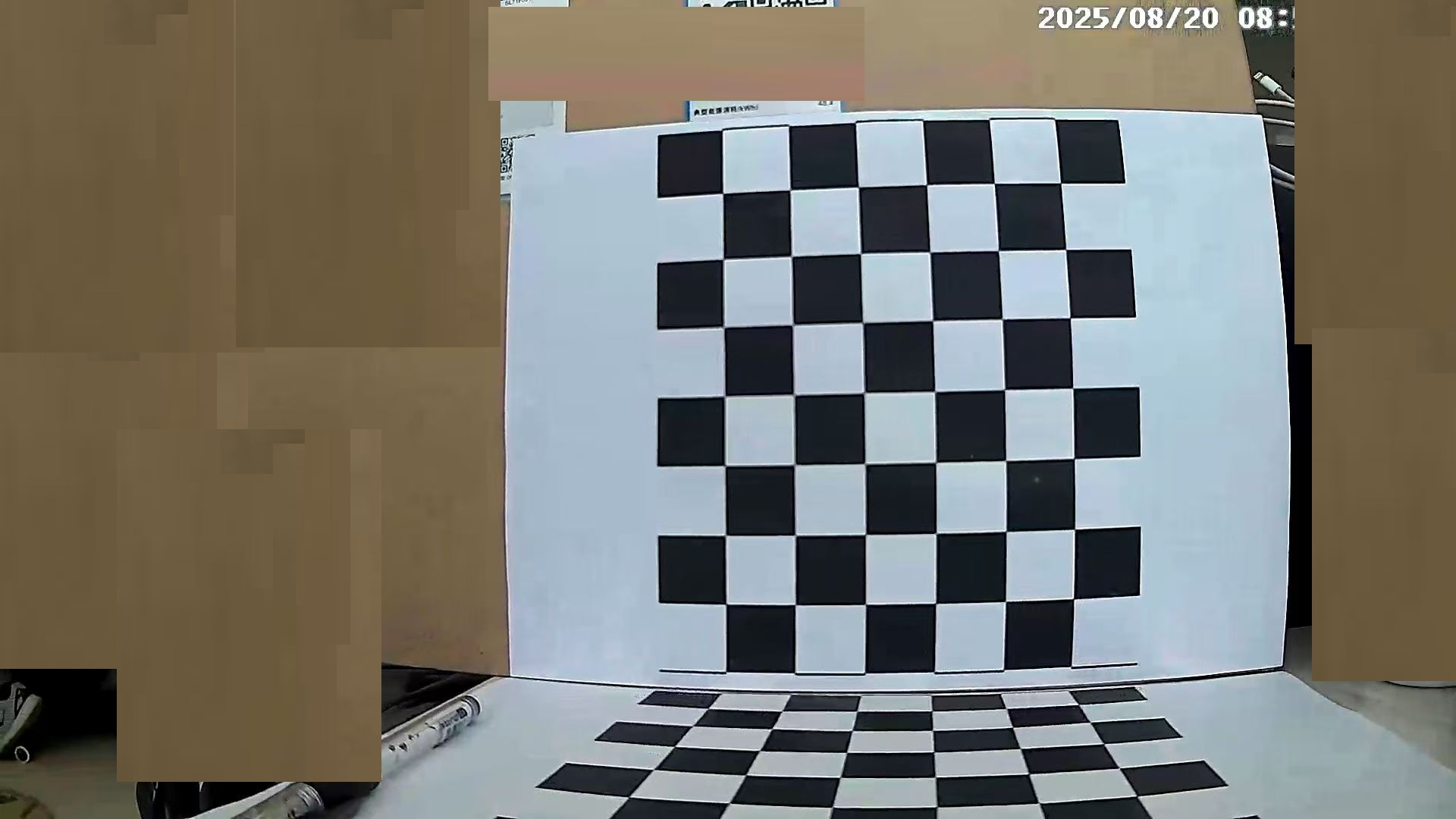

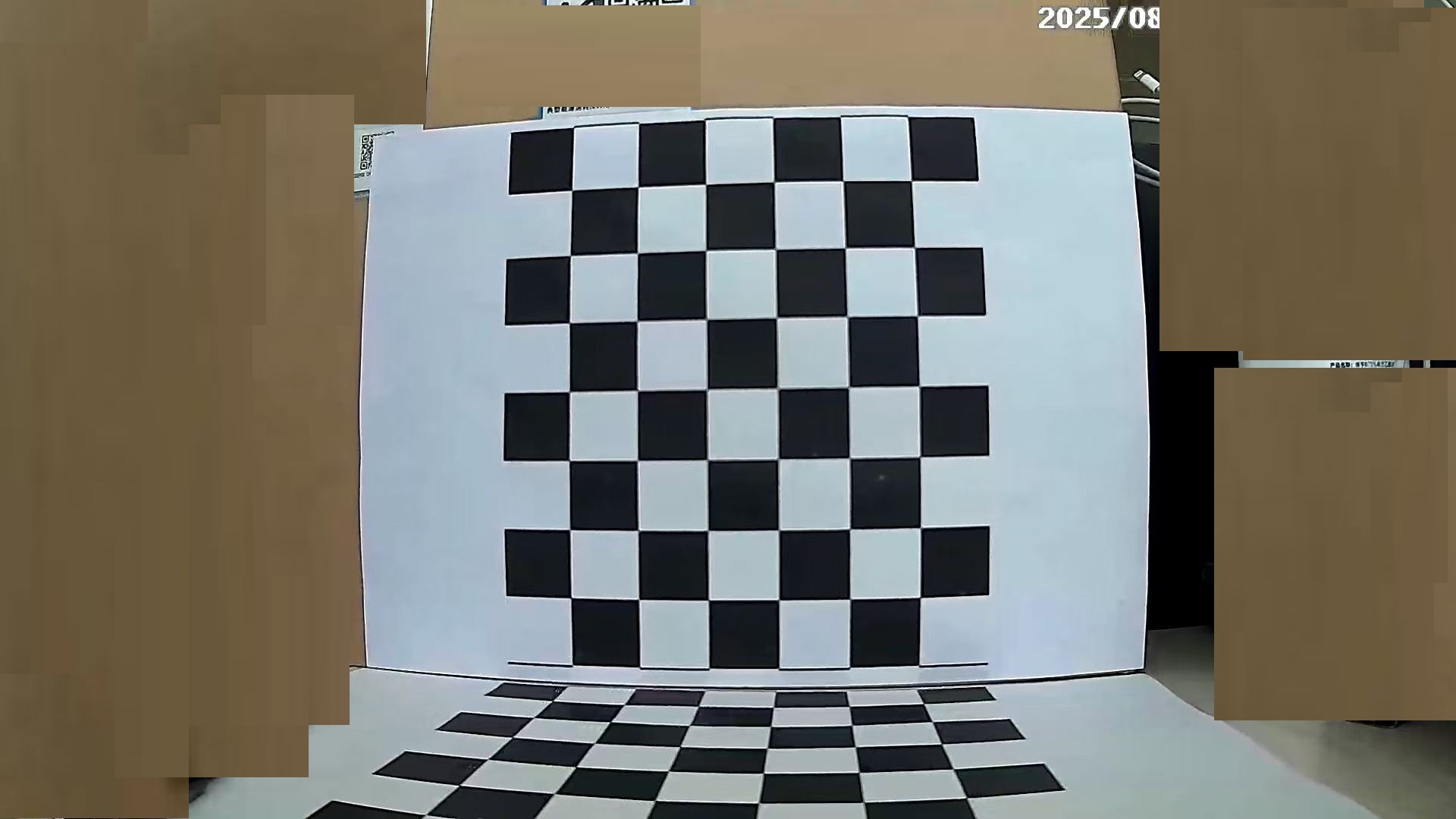

流程简介:1、先用同一套棋盘(内角点尺寸为 5×6、方格边长 25 mm)在相机不同姿态下拍摄多张覆盖视场的平面棋盘图片;

2、对每张图片用 OpenCV 的 findChessboardCorners 检测内角点并用 cornerSubPix 做亚像素精修,按棋盘格的物理坐标(以 0.025 m 为单位)生成对应的三维目标点(所有点都在同一平面上);

3、然后把这些二维角点与对应的三维平面点送入标定算法(OpenCV 的 calibrateCamera 实现了张正友方法的思想:通过每幅图像估计平面对像平面的单应矩阵、由多个单应求解相机内参的线性近似,再用非线性最小二乘对内参、畸变系数及每幅图的外参进行联合优化以最小化重投影误差)。

4、运行结束后你得到了相机内参矩阵(fx, fy, cx, cy)、畸变系数(k1,k2,p1,p2,k3…)、以及每张图的旋转向量和平移向量(tvec 单位为米,因为你用了 0.025 m 的方格边长);并用重投影误差(你得到的平均约 0.0899 px,calibrateCamera 返回 RMS ≈ 0.4966)评估标定精度——误差极小,说明标定质量很好。

最后把结果保存为 camera_calibration_results.npz(并生成 corners_all.csv、可视化图 _corners.jpg / _reproj.jpg),便于后续去畸变、位姿估计(PnP)或把参数导出为 YAML/JSON 在其他程序中复用。相机拍摄技巧:

用A4纸打印2-4张棋盘 chessboard_10x7_A4.pdf 采用阿里网盘保存的,可自行下载。

1、准备阶段: 用需要测量的摄像机拍摄照片,多个角度、方向拍摄。我拍了400多张才有这几张有效。

2、做检测前创建一个Python项目,在Python代码根目录创建一个calib_images目录。这个目录就是用来存放你做检测的图片

3、调用下面这段Python,来测试你相机的内参。

点击查看代码

import cv2 import numpy as np import glob import os import csv from datetime import datetime def calibrate_camera(calib_dir="calib_images", square_size_mm=25.0, min_good_images=5, candidate_cols_range=(4, 10), candidate_rows_range=(3, 8), save_csv="corners_all.csv"): """ 针对用户场景(同一相机、同一棋盘、square_size 已知)做的标定脚本。 - 先尝试在第一张图片上自动检测棋盘尺寸 (CHECKERBOARD),找到后固定该尺寸用于所有图片。 - 打印并保存每张图片的角点像素坐标,保存合并 CSV 供检查。 """ square_size = float(square_size_mm) / 1000.0 # mm -> m if not os.path.exists(calib_dir): print(f"错误:未找到目录 '{calib_dir}',请确认路径。") return # 收集图片 image_formats = ["*.jpg", "*.jpeg", "*.png", "*.bmp"] images = [] for fmt in image_formats: images.extend(sorted(glob.glob(os.path.join(calib_dir, fmt)))) if not images: print(f"错误:目录 '{calib_dir}' 中未找到任何图片。") return print(f"找到 {len(images)} 张图片({calib_dir})。开始检测 — {datetime.now().isoformat()}n") # 候选 pattern 列表(内角点数) candidate_patterns = [(c, r) for c in range(candidate_cols_range[0], candidate_cols_range[1] + 1) for r in range(candidate_rows_range[0], candidate_rows_range[1] + 1)] # 先用第一张图自动识别棋盘尺寸(更稳妥) first_img = cv2.imread(images[0]) first_gray = cv2.cvtColor(first_img, cv2.COLOR_BGR2GRAY) found_pattern = None flags = cv2.CALIB_CB_ADAPTIVE_THRESH | cv2.CALIB_CB_NORMALIZE_IMAGE | cv2.CALIB_CB_FAST_CHECK print("尝试在第一张图片上检测棋盘尺寸 (自动识别)...") for pat in candidate_patterns: ret, _ = cv2.findChessboardCorners(first_gray, pat, flags) if ret: found_pattern = pat print(f"在第一张图片检测到棋盘内角点尺寸: {found_pattern},将其作为全局 CHECKERBOARD。n") break if found_pattern is None: print("警告:第一张图片未能自动识别棋盘尺寸。将遍历候选尺寸寻找第一个能识别的尺寸...") for pat in candidate_patterns: ret, _ = cv2.findChessboardCorners(first_gray, pat, flags) if ret: found_pattern = pat print(f"找到尺寸: {found_pattern}n") break if found_pattern is None: print("注意:未在第一张图片找到可用尺寸。脚本将对每张图片分别尝试候选尺寸(回退策略)。n") # 准备存放点与 CSV 输出 objpoints = [] imgpoints = [] used_image_names = [] detected_patterns = [] csv_rows = [] csv_header = ["image", "pattern_cols", "pattern_rows", "corner_index", "x", "y"] # 检测所有图片 for idx, fname in enumerate(images, start=1): img = cv2.imread(fname) if img is None: print(f"[{idx}/{len(images)}] 警告:无法读取图片 {os.path.basename(fname)},跳过") continue gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY) success = False tried_patterns = [] patterns_to_try = [found_pattern] if found_pattern is not None else candidate_patterns # 若 found_pattern 不为 None,优先尝试它;若失败再回退到所有 candidate_patterns for pat in patterns_to_try: if pat is None: continue tried_patterns.append(pat) ret, corners = cv2.findChessboardCorners(gray, pat, flags) if ret: # 亚像素精修 criteria = (cv2.TERM_CRITERIA_EPS + cv2.TERM_CRITERIA_MAX_ITER, 30, 0.001) corners2 = cv2.cornerSubPix(gray, corners, (11, 11), (-1, -1), criteria) cols, rows = pat objp = np.zeros((rows * cols, 3), np.float32) objp[:, :2] = np.mgrid[0:cols, 0:rows].T.reshape(-1, 2) objp *= square_size objpoints.append(objp) imgpoints.append(corners2) used_image_names.append(os.path.basename(fname)) detected_patterns.append(pat) # 保存带角点图 vis = img.copy() cv2.drawChessboardCorners(vis, pat, corners2, ret) result_path = os.path.splitext(fname)[0] + "_corners.jpg" cv2.imwrite(result_path, vis) # 打印并记录坐标 corners_xy = corners2.reshape(-1, 2) print(f"[{idx}/{len(images)}] {os.path.basename(fname)}: 检测成功, pattern={pat}, corners={len(corners_xy)}") per_line = 8 coord_strs = [f"({x:.3f}, {y:.3f})" for x, y in corners_xy] for i in range(0, len(coord_strs), per_line): print(" " + " ".join(coord_strs[i:i+per_line])) print(f" 结果图已保存: {os.path.basename(result_path)}n") # 写入 CSV 行 for i_pt, (x, y) in enumerate(corners_xy): csv_rows.append([os.path.basename(fname), cols, rows, i_pt, f"{x:.6f}", f"{y:.6f}"]) success = True break # 找到 pattern 则跳出 pattern 尝试 if not success: # 如果之前只尝试了 found_pattern,而没有成功,回退尝试所有 candidate_patterns if found_pattern is not None: for pat in candidate_patterns: if pat in tried_patterns: continue ret, corners = cv2.findChessboardCorners(gray, pat, flags) if ret: criteria = (cv2.TERM_CRITERIA_EPS + cv2.TERM_CRITERIA_MAX_ITER, 30, 0.001) corners2 = cv2.cornerSubPix(gray, corners, (11, 11), (-1, -1), criteria) cols, rows = pat objp = np.zeros((rows * cols, 3), np.float32) objp[:, :2] = np.mgrid[0:cols, 0:rows].T.reshape(-1, 2) objp *= square_size objpoints.append(objp) imgpoints.append(corners2) used_image_names.append(os.path.basename(fname)) detected_patterns.append(pat) vis = img.copy() cv2.drawChessboardCorners(vis, pat, corners2, ret) result_path = os.path.splitext(fname)[0] + "_corners.jpg" cv2.imwrite(result_path, vis) corners_xy = corners2.reshape(-1, 2) print(f"[{idx}/{len(images)}] {os.path.basename(fname)}: 检测成功 (回退), pattern={pat}, corners={len(corners_xy)}") coord_strs = [f"({x:.3f}, {y:.3f})" for x, y in corners_xy] for i in range(0, len(coord_strs), 8): print(" " + " ".join(coord_strs[i:i+8])) print(f" 结果图已保存: {os.path.basename(result_path)}n") for i_pt, (x, y) in enumerate(corners_xy): csv_rows.append([os.path.basename(fname), cols, rows, i_pt, f"{x:.6f}", f"{y:.6f}"]) success = True break if not success: print(f"[{idx}/{len(images)}] {os.path.basename(fname)}: 未检测到角点,已跳过n") # 保存 CSV(所有角点) if csv_rows: with open(save_csv, "w", newline='', encoding='utf-8') as f: writer = csv.writer(f) writer.writerow(csv_header) writer.writerows(csv_rows) print(f"所有角点坐标已合并保存为: {save_csv}n") else: print("未检测到任何角点,CSV 未生成。") good_n = len(objpoints) print(f"成功检测到 {good_n} 张有效图片(用于标定)。需要至少 {min_good_images} 张。") if good_n < min_good_images: print("有效图片不足,无法进行可靠标定。请检查图片质量或拍摄更多不同视角的棋盘照片。") return # image_size 使用第一张图片(因为你确认所有图片相同分辨率) sample_img = cv2.imread(images[0]) image_size = (sample_img.shape[1], sample_img.shape[0]) # (width, height) # 标定 print("开始标定(calibrateCamera)...") rms, camera_matrix, dist_coeffs, rvecs, tvecs = cv2.calibrateCamera(objpoints, imgpoints, image_size, None, None) print("n===== 标定结果 =====") print(f"calibrateCamera 返回 RMS: {rms:.6f}") print("相机内参 (camera_matrix):") print(camera_matrix) print("n畸变系数 (dist_coeffs):") print(dist_coeffs.ravel()) # 平均重投影误差 total_error = 0.0 for i in range(len(objpoints)): imgpoints2, _ = cv2.projectPoints(objpoints[i], rvecs[i], tvecs[i], camera_matrix, dist_coeffs) error = cv2.norm(imgpoints[i], imgpoints2, cv2.NORM_L2) / len(imgpoints2) total_error += error mean_error = total_error / len(objpoints) print(f"n平均重投影误差: {mean_error:.6f} 像素") # 详细打印内参与畸变 fx = camera_matrix[0, 0]; fy = camera_matrix[1, 1]; cx = camera_matrix[0, 2]; cy = camera_matrix[1, 2] print("n相机内参 (详细):") print(f" fx = {fx:.6f}") print(f" fy = {fy:.6f}") print(f" cx = {cx:.6f}") print(f" cy = {cy:.6f}") coeffs = dist_coeffs.ravel() # 可能只有 4 或 5 个畸变参数 coeffs_str = ", ".join([f"{v:.8f}" for v in coeffs]) print(f"n畸变系数: {coeffs_str}") # 保存结果 np.savez("camera_calibration_results.npz", camera_matrix=camera_matrix, dist_coeffs=dist_coeffs, rvecs=rvecs, tvecs=tvecs, mean_reprojection_error=mean_error, used_image_names=np.array(used_image_names), detected_patterns=np.array(detected_patterns), square_size_m=square_size) print("n标定数据已保存: camera_calibration_results.npz") print("完成。") if __name__ == "__main__": calibrate_camera() 4、最后会把输出内容:

1). 结果打印出来;

2). 生成每个图片内角点标注的图片文件 图片名_corners.jpg

3). 输出一个 camera_calibration_results.npz 文件;

4). 以及 corners_all.csv文件。

5、如有些看不懂,则用下面这段代码转换下。即可分为机器与人都能看懂的文件。代码如下:

点击查看代码

#!/usr/bin/env python3 # -*- coding: utf-8 -*- """ print_calib_readable.py 从 camera_calibration_results.npz 读取标定结果, 打印易读报告,并同时生成: - calibration_readable.txt (人类可读) - calibration_summary.json (机器可读) - per_image_reprojection.csv (若 corners_all.csv 存在且可计算逐图误差) 修复 ValueError 的关键点:不把 numpy array 当作布尔值直接判断,而是检查 data.files 中是否包含字段。 """ import numpy as np import cv2 import os import csv import json from math import sqrt NPZ_FILE = "camera_calibration_results.npz" CORNERS_CSV = "corners_all.csv" READABLE_TXT = "calibration_readable.txt" SUMMARY_JSON = "calibration_summary.json" PER_IMAGE_CSV = "per_image_reprojection.csv" def load_npz(fn): if not os.path.exists(fn): raise FileNotFoundError(f"未找到文件: {fn}") return np.load(fn, allow_pickle=True) def to_str_list(arr): """把 numpy array/iterable 转为 str 列表,兼容 bytes""" if arr is None: return None out = [] try: for v in arr: if isinstance(v, bytes): out.append(v.decode('utf-8', errors='ignore')) else: out.append(str(v)) except Exception: # fallback: single value try: if isinstance(arr, bytes): return [arr.decode('utf-8', errors='ignore')] return [str(arr)] except Exception: return None return out def read_corners_csv(csv_path): """读取 corners_all.csv (image, pattern_cols, pattern_rows, corner_index, x, y)""" if not os.path.exists(csv_path): return None d = {} with open(csv_path, newline='', encoding='utf-8') as f: reader = csv.DictReader(f) for row in reader: name = row['image'] x = float(row['x']); y = float(row['y']) idx = int(row['corner_index']) d.setdefault(name, []).append((idx, (x, y))) # sort by index and convert to np.array for name in list(d.keys()): arr = [p for i,p in sorted(d[name], key=lambda it: it[0])] d[name] = np.array(arr, dtype=np.float32) return d def format_mat(mat): return "n".join([" [" + " ".join(f"{v:12.6f}" for v in row) + "]" for row in mat]) def safe_get(data, *keys): """从 data (npz) 中按优先级取值,返回 None 或 value""" for k in keys: if k in data.files: return data[k] return None def ensure_list_of_vectors(arr): """保证 rvecs/tvecs 转为 python list,元素为 np.array""" if arr is None: return None try: return [np.array(v) for v in arr] except Exception: # 如果是单个 array 扩展为 list try: return [np.array(arr)] except Exception: return None def main(): try: data = load_npz(NPZ_FILE) except Exception as e: print("加载 NPZ 失败:", e) return # 安全地获取字段(不直接用 data.get(...) 或在 if 中用数组判断) camera_matrix = safe_get(data, "camera_matrix", "mtx", "camera_mat", "camera_matx") dist_coeffs = safe_get(data, "dist_coeffs", "dist_coeff", "dist", "dist_coeff") rvecs = safe_get(data, "rvecs") tvecs = safe_get(data, "tvecs") mean_err = safe_get(data, "mean_reprojection_error", "mean_error") used_images_raw = safe_get(data, "used_image_names") detected_patterns_raw = safe_get(data, "detected_patterns") square_size_m_val = safe_get(data, "square_size_m") rms_val = safe_get(data, "rms", "calibrate_rms") # optional # normalize some fields used_images = to_str_list(used_images_raw) if used_images_raw is not None else None # detected_patterns may be array-like of tuples or strings; normalize to list of (cols,rows) tuples detected_patterns = None if detected_patterns_raw is not None: try: # try to convert to list of tuples tmp = [] for p in detected_patterns_raw: # p could be array([c,r]) or b'(c,r)' if isinstance(p, (np.ndarray, list, tuple)): tmp.append((int(p[0]), int(p[1]))) else: # try parse s = str(p) s = s.strip("()[] ") parts = [int(x) for x in s.replace(",", " ").split() if x.strip().isdigit()] if len(parts) >= 2: tmp.append((parts[0], parts[1])) detected_patterns = tmp except Exception: detected_patterns = None # convert rvecs/tvecs to python lists rvecs_list = ensure_list_of_vectors(rvecs) tvecs_list = ensure_list_of_vectors(tvecs) # start composing readable text and summary dict lines = [] lines.append("===== Camera Calibration (Human-Readable) =====n") if camera_matrix is None: lines.append("错误:NPZ 文件中未找到相机内参 (camera_matrix)。n") # write file and exit with open(READABLE_TXT, "w", encoding="utf-8") as f: f.write("n".join(lines)) print("已生成 (部分) 可读文件:", READABLE_TXT) return # print camera matrix lines.append("相机内参 (camera_matrix) — 3x3 矩阵 (单位: 像素):") lines.append(format_mat(camera_matrix)) fx = float(camera_matrix[0,0]); fy = float(camera_matrix[1,1]); cx = float(camera_matrix[0,2]); cy = float(camera_matrix[1,2]) skew = float(camera_matrix[0,1]) if camera_matrix.shape[1] > 1 else 0.0 lines.append("n标准参数名称与数值:") lines.append(f" fx (焦距 x方向) = {fx:.6f} px") lines.append(f" fy (焦距 y方向) = {fy:.6f} px") lines.append(f" cx (主点 x) = {cx:.6f} px") lines.append(f" cy (主点 y) = {cy:.6f} px") lines.append(f" skew (相机倾斜/skew) = {skew:.6f} (通常接近 0)n") # distortion if dist_coeffs is None: lines.append("畸变系数未找到 (dist_coeffs)。n") dist_list = [] else: d = np.array(dist_coeffs).ravel() lines.append("畸变系数 (distortion coefficients):") labels = ["k1","k2","p1","p2","k3","k4","k5","k6"] for i,val in enumerate(d): lab = labels[i] if i < len(labels) else f"d{i+1}" lines.append(f" {lab:3s} = {float(val):.8f}") lines.append("(顺序通常为 k1, k2, p1, p2, k3 )n") dist_list = [float(x) for x in d.tolist()] # RMS and mean reprojection if rms_val is not None: try: rms_f = float(rms_val) lines.append(f"calibrateCamera 返回 RMS (函数返回值) = {rms_f:.6f}") except Exception: pass if mean_err is not None: try: mean_err_f = float(mean_err) lines.append(f"mean_reprojection_error (按图片平均计算) = {mean_err_f:.6f} 像素") except Exception: pass lines.append("(推荐关注按图片平均的 mean_reprojection_error,更直观)n") if square_size_m_val is not None: try: ss = float(square_size_m_val) lines.append(f"棋盘方格实际边长 (square_size_m) = {ss:.6f} m") except Exception: pass # patterns & images if detected_patterns is not None: unique = [] for p in detected_patterns: if p not in unique: unique.append(p) if len(unique) == 1: lines.append(f"使用的棋盘内角点尺寸 (pattern cols,rows) = {unique[0]} (一致,所有图片使用同一尺寸)") common_pattern = unique[0] else: lines.append("每张图片检测到的棋盘内角点尺寸 (per-image):") for i, p in enumerate(detected_patterns): name = used_images[i] if used_images and i < len(used_images) else f"img#{i}" lines.append(f" {name:20s} -> {p}") common_pattern = None else: common_pattern = None if used_images is not None: lines.append(f"n用于标定的图片数量 = {len(used_images)}") lines.append("图片列表(用于标定,按序):") for nm in used_images: lines.append(" - " + nm) lines.append("") # write readable txt now (we will append per-image detail later) with open(READABLE_TXT, "w", encoding="utf-8") as f: f.write("n".join(lines)) print("已生成可读文本报告:", READABLE_TXT) # 准备 summary json summary = { "fx": fx, "fy": fy, "cx": cx, "cy": cy, "skew": skew, "distortion": {f"k{i+1}": (dist_list[i] if i < len(dist_list) else None) for i in range(6)}, "distortion_raw": dist_list, "rms": float(rms_val) if rms_val is not None else None, "mean_reprojection_error": float(mean_err) if mean_err is not None else None, "square_size_m": float(square_size_m_val) if square_size_m_val is not None else None, "pattern_common": common_pattern, "images_used": used_images if used_images is not None else [] } # 如果存在 corners_all.csv,则计算逐图重投影误差并写入 CSV 与 JSON corners_dict = read_corners_csv(CORNERS_CSV) per_image_stats = [] if corners_dict is None: print(f"未找到 {CORNERS_CSV}(可选)。若存在该文件,脚本会计算逐图重投影误差及 rvec/tvec。") else: # ensure rvecs/tvecs exist if rvecs_list is None or tvecs_list is None: print("注意:NPZ 中缺少 rvecs/tvecs,无法计算逐图重投影误差。") else: # iterate using used_images order if available names_order = used_images if used_images is not None else sorted(list(corners_dict.keys())) # prepare CSV writer with open(PER_IMAGE_CSV, "w", newline='', encoding='utf-8') as fcsv: writer = csv.writer(fcsv) writer.writerow(["image", "pattern_cols", "pattern_rows", "corner_count", "rmse_px", "tvec_x_m", "tvec_y_m", "tvec_z_m", "rvec_x", "rvec_y", "rvec_z"]) for i, name in enumerate(names_order): base = os.path.basename(name) if base not in corners_dict: # try direct name (maybe used_images stores base names already) if name in corners_dict: base = name else: print(f"{base}: 在 {CORNERS_CSV} 中未找到对应角点,跳过逐图误差计算。") continue img_corners = corners_dict[base] if i >= len(rvecs_list) or i >= len(tvecs_list): print(f"{base}: rvecs/tvecs 不足,跳过。") continue rvec = rvecs_list[i].reshape(3,1) tvec = tvecs_list[i].reshape(3,1) # construct objp based on detected_patterns if available else skip if detected_patterns is None or i >= len(detected_patterns): print(f"{base}: 无法构造 objpoints(未保存 detected_patterns),跳过该图重投影计算。") continue pat = detected_patterns[i] cols, rows = pat objp = np.zeros((rows*cols, 3), np.float32) objp[:,:2] = np.mgrid[0:cols, 0:rows].T.reshape(-1,2) if square_size_m_val is not None: objp[:,:2] *= float(square_size_m_val) # project proj, _ = cv2.projectPoints(objp, rvec, tvec, camera_matrix, dist_coeffs) proj = proj.reshape(-1,2) if proj.shape != img_corners.shape: print(f"{base}: 投影点数量 {proj.shape[0]} 与 CSV 中角点数量 {img_corners.shape[0]} 不匹配,跳过。") continue err = np.sqrt(np.mean(np.sum((img_corners - proj)**2, axis=1))) R, _ = cv2.Rodrigues(rvec) writer.writerow([base, cols, rows, int(img_corners.shape[0]), float(err), float(tvec[0,0]), float(tvec[1,0]), float(tvec[2,0]), float(rvec[0,0]), float(rvec[1,0]), float(rvec[2,0])]) per_image_stats.append({ "image": base, "pattern": [int(cols), int(rows)], "corner_count": int(img_corners.shape[0]), "rmse_px": float(err), "tvec_m": [float(tvec[0,0]), float(tvec[1,0]), float(tvec[2,0])], "rvec": [float(rvec[0,0]), float(rvec[1,0]), float(rvec[2,0])] }) print("已生成逐图重投影 CSV:", PER_IMAGE_CSV) summary["per_image"] = per_image_stats # 写入 summary json with open(SUMMARY_JSON, "w", encoding='utf-8') as fj: json.dump(summary, fj, indent=2, ensure_ascii=False) print("已生成机器可读摘要:", SUMMARY_JSON) # append per-image human-readable section to readable txt with open(READABLE_TXT, "a", encoding='utf-8') as f: f.write("nn===== Per-image Reprojection Summary =====nn") if not per_image_stats: f.write("无可用的逐图重投影结果(可能缺少 corners_all.csv 或 rvecs/tvecs/detected_patterns)。n") else: for s in per_image_stats: f.write(f"Image: {s['image']}n") f.write(f" pattern (cols,rows) = {s['pattern']} ; corner_count = {s['corner_count']}n") f.write(f" reprojection RMSE = {s['rmse_px']:.6f} pxn") f.write(f" tvec (m) = [{s['tvec_m'][0]:.6f}, {s['tvec_m'][1]:.6f}, {s['tvec_m'][2]:.6f}]n") f.write(f" rvec = [{s['rvec'][0]:.6f}, {s['rvec'][1]:.6f}, {s['rvec'][2]:.6f}]nn") print("已把逐图结果追加到可读报告:", READABLE_TXT) print("n全部完成。") if __name__ == "__main__": main()

![洛谷 P11345 [KTSC 2023 R2] 基地简化 题解](http://www.itfaba.com/wp-content/themes/kemi/timthumb.php?src=http://www.itfaba.com/wp-content/themes/kemi/img/random/2.jpg&w=218&h=124&zc=1)